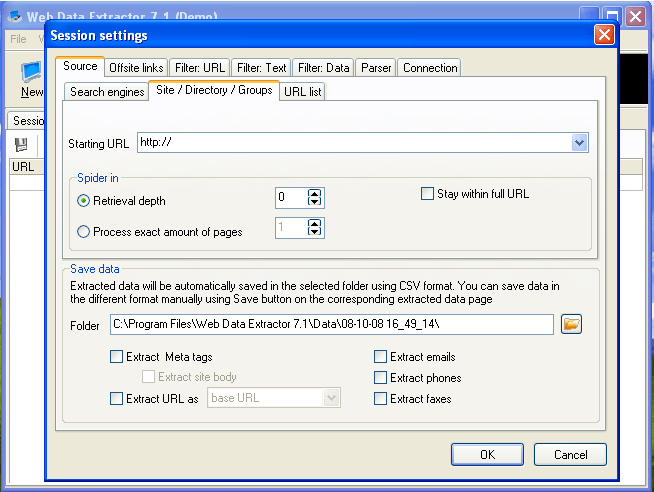

Limit web scraping by number of posts or items and extract all data. I understand it might not be up to you, but I'd strongly suggest trying to cut the PDF step out of the ordering process. Unlimited Reddit web scraper to crawl posts, comments, communities, and users without login. In this article, we will learn how to use PRAW to scrape posts from different subreddits, as well as how to get comments from a specific post. Prebuilt robot setup: browse prebuilt robots for popular use cases and start using them right away. As its name suggests, PRAW is a Python wrapper for the Reddit API, which enables you to scrape data from subreddits, create a bot, and much more. Monitoring: extract data on a schedule and get notified of changes. In my case I had ~4,000 PDFs from a few years ago, but it sounds like you are working with an ongoing process. Data extraction: extract specific data from any website in the form of a spreadsheet that fills itself. Web Scraping Services India: we provide professional web data extraction and website scraping services at the best prices. We first need to identify what type of item we are working with. It ended up working pretty well for me, so if the other options don't work for you, it might be worth looking into. The type prefixes table is sourced from the Reddit API docs.
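As a rough sketch of the two ideas above (identifying an item's type from its prefix, and pulling posts from a subreddit with PRAW), something like the following could work. The prefix-to-type mapping follows the Reddit API docs; the function names `kind_of` and `hot_posts` and the choice of the "hot" listing are assumptions made for illustration, and `hot_posts` expects an already configured `praw.Reddit` instance.

```python
# Type prefixes as listed in the Reddit API docs.
TYPE_PREFIXES = {
    "t1": "comment",
    "t2": "account",
    "t3": "link",
    "t4": "message",
    "t5": "subreddit",
    "t6": "award",
}


def kind_of(fullname):
    """Return the item type for a fullname such as 't3_abc123', or None if unknown."""
    prefix, sep, item_id = fullname.partition("_")
    if not sep or not item_id:
        return None
    return TYPE_PREFIXES.get(prefix)


def hot_posts(reddit, subreddit_name, limit=10):
    """Collect basic fields from a subreddit's hot listing.

    `reddit` is assumed to be a configured praw.Reddit instance
    (hypothetical helper, not part of PRAW itself).
    """
    rows = []
    for submission in reddit.subreddit(subreddit_name).hot(limit=limit):
        rows.append({
            "fullname": submission.fullname,
            "kind": kind_of(submission.fullname),
            "title": submission.title,
            "score": submission.score,
        })
    return rows
```

With real credentials, `reddit` would typically be created with `praw.Reddit(client_id=..., client_secret=..., user_agent=...)` and passed in; keeping it as a parameter makes the helper easy to exercise with a stand-in object.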

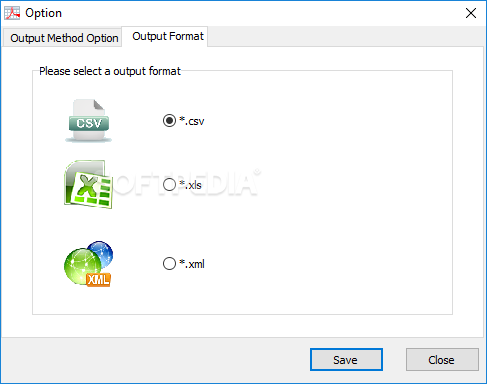

I used pytesseract to "read" the images and extract the text into a giant string. I used regex to search the string for the data I needed. However, if they're like mine (no tables, a different number of pages, the data I needed could be on any of them, and different kinds of PDFs: some were scanned images, some were forms, others were "regular" PDFs), then you might need to get more creative. redditadsR helps R users access Reddit Ads digital marketing data via Windsor. The Reddit Data Extractor, or RDE, is a command-line tool that allows users to extract data from Reddit in the form of organized CSV files. By providing a list of subreddits to scrape and a list of keywords to look for, the RDE tool returns all submissions and comments in the comment tree that are relevant to the keywords at hand. This will be much easier if your PDFs are in tables and in a consistent format. Open your file extractor and extract it with 1234 as the password. YouTube, Reddit, and more Analyze website links for SEO Extract. I'm pretty much a beginner with Python, so I'm sure there's a better way, but I had to deal with something similar a couple of months ago. ImportFromWeb imports data from any website into your spreadsheet using a simple.
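The pytesseract-plus-regex approach described above might look roughly like this. It is only a sketch: the "Order No" pattern and the helper names `find_order_numbers` and `ocr_images` are hypothetical, since the original does not say which fields were extracted, and the OCR step assumes that the pages have already been saved as image files and that Pillow, pytesseract, and the Tesseract binary are installed.

```python
import re

# Hypothetical pattern: an order number written like "Order No: 12345".
ORDER_RE = re.compile(r"Order\s+No[.:]?\s*(\d+)", re.IGNORECASE)


def find_order_numbers(text):
    """Return every order number found in a block of OCR text."""
    return ORDER_RE.findall(text)


def ocr_images(image_paths):
    """Read each image with pytesseract and join the text into one string."""
    # Imported lazily so the regex helper works without Tesseract installed.
    from PIL import Image
    import pytesseract

    pages = []
    for path in image_paths:
        with Image.open(path) as img:
            pages.append(pytesseract.image_to_string(img))
    return "\n".join(pages)
```

Keeping the OCR and the regex search in separate functions means the pattern can be tuned against saved text without re-running OCR on every page.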
